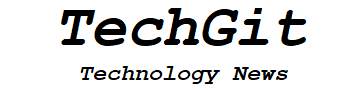

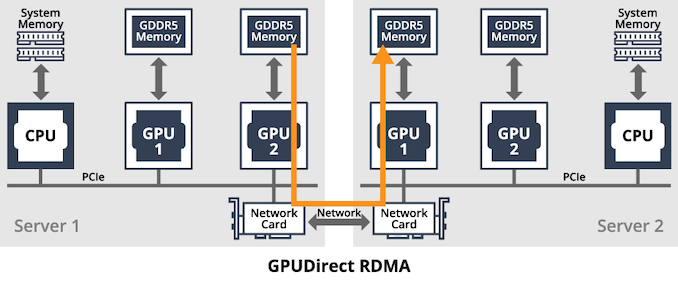

One of the interesting aspects of NVIDIA’s A100 card is the compute density it offers, especially for AI applications. There is set to be a strong rush to build high-density AI platforms that can take advantage of the new features A100 offers in the PCIe form factor, and GIGABYTE was the first in my inbox with news of its new G492 server systems, built to take up to 10 A100 accelerators. These machines are built on AMD EPYC, which provides PCIe Gen4 support, and they offer GPU-to-GPU direct access, direct transfers, and GPUDirect RDMA.

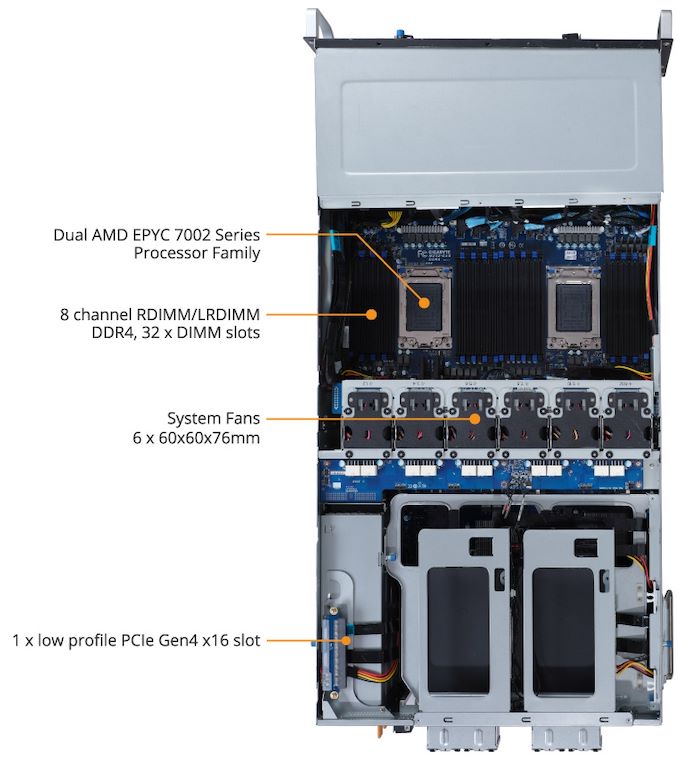

The G492 servers use dual EPYC CPUs, allowing for 128 PCIe 4.0 lanes in total. However, in order to expand support to 10 GPUs as well as up to 12 additional NVMe storage drives, PCIe 4.0 switches are used (Broadcom PEX88000 in the G492-Z51, Microchip in the G492-Z50). This also allows for an additional three PCIe x16 links and an OCP 3.0 slot for add-on cards for SAS drives or networking, such as Ethernet or Mellanox InfiniBand.
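To see why switches are needed, here is a rough lane budget as a quick sketch. The 128-lane figure and the device counts come from the text above; the per-device link widths (x16 per GPU, x4 per NVMe drive, x16 for the OCP 3.0 slot) are my assumptions based on typical configurations, not figures confirmed by GIGABYTE.

```python
# Rough PCIe lane budget for a G492-style configuration.
# CPU_LANES and the device counts are from the article; the link widths
# marked "assumed" are typical values, not GIGABYTE-confirmed figures.

CPU_LANES = 128  # dual EPYC, PCIe 4.0 lanes in total

# (device, count, lanes per device)
DEVICES = [
    ("A100 GPU", 10, 16),        # x16 per GPU (assumed)
    ("NVMe drive", 12, 4),       # x4 per drive (assumed)
    ("PCIe x16 link", 3, 16),    # stated as x16 in the article
    ("OCP 3.0 slot", 1, 16),     # x16 (assumed)
]


def lanes_required(devices):
    """Sum the downstream lanes needed if every device had a dedicated link."""
    return sum(count * width for _, count, width in devices)


required = lanes_required(DEVICES)
shortfall = required - CPU_LANES

for name, count, width in DEVICES:
    print(f"{name:<14} {count:>2} x{width:<2} = {count * width:>3} lanes")
print(f"Required: {required} lanes, available from CPUs: {CPU_LANES}")
print(f"Shortfall covered by PCIe switches: {shortfall} lanes")
```

Under these assumptions the devices would need more than twice the lanes the two CPUs expose, which is the gap the PCIe 4.0 switches fill by sharing upstream links among several downstream devices.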

The use of dual EPYC CPUs, up to 280W each, also allows for up to 8 TiB of DDR4-3200 memory support. The system comes with three 2200W 80 PLUS Platinum redundant power supplies. The 10 GPU slots are rated for 250W TDP apiece, and the system comes equipped with dual 10GBase-T and AST2500 management as standard.

GIGABYTE customers interested in deploying the G492 should get in contact with their local distributor.

Source: GIGABYTE